I decided to take a month off from client projects so I could work on subjects I don’t usually have time for.

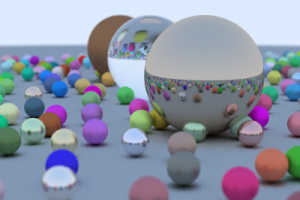

Since I recently began learning Haskell, I knew I had to code a real project. Actually, I think it’s the only way to study programming seriously: stick to a problem and find ways to attack it. A raytracer looked like a reasonable project, since raytracers rely on recursion, which is the specialty of functional languages like Haskell.

Anyway, to sum up: I began with Haskell, and I ended with Swift. I ran into difficulties with pseudo-random numbers in Haskell; I was tired, and I was not sure what was wrong (this was my first raytracer). I have not really given up on the Haskell version, I just moved on to keep my motivation.

The result is two projects:

https://github.com/Ceroce/HaskellRay

https://github.com/Ceroce/SwiftRay

SwiftRay

I had no precise idea of how to code a raytracer, so I followed an e-book, Ray Tracing in One Weekend by Peter Shirley.

The original source code was written in C++. I was asked if porting it to Swift had been hard: not really. Sometimes the C++ code was difficult to follow because of the way it is written, but Swift is way more elegant than C++ and has all the required features, in particular operator overloading, which is more than useful when working with vectors.
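To give an idea of why operator overloading matters here, this is a minimal sketch of a vector type. The `Vec3` type and the `point(origin:direction:at:)` helper are hypothetical names chosen for illustration; they are not copied from the SwiftRay source or from Shirley's book.

```swift
// Hypothetical 3D vector type, for illustration only.
struct Vec3: Equatable {
    var x: Double
    var y: Double
    var z: Double

    // Component-wise addition.
    static func + (a: Vec3, b: Vec3) -> Vec3 {
        Vec3(x: a.x + b.x, y: a.y + b.y, z: a.z + b.z)
    }

    // Component-wise subtraction.
    static func - (a: Vec3, b: Vec3) -> Vec3 {
        Vec3(x: a.x - b.x, y: a.y - b.y, z: a.z - b.z)
    }

    // Scaling by a scalar.
    static func * (s: Double, v: Vec3) -> Vec3 {
        Vec3(x: s * v.x, y: s * v.y, z: s * v.z)
    }
}

// Dot product, written as a free function.
func dot(_ a: Vec3, _ b: Vec3) -> Double {
    a.x * b.x + a.y * b.y + a.z * b.z
}

// Point reached after travelling a distance t along a ray.
// With overloaded operators the code reads like the math: origin + t * direction.
func point(origin: Vec3, direction: Vec3, at t: Double) -> Vec3 {
    origin + t * direction
}
```

Without the overloads, the last function would have to spell out each component by hand, which gets tedious quickly in a raytracer.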

The second question was how it performs compared to C++. I don’t know, since I have not measured it. I don’t really care, and performance was not a goal of my experiment. All I can say is that it’s about the speed I expected: very slow. Shirley uses a path tracing algorithm, and according to Wikipedia, slowness is characteristic of such raytracers. Rendering with 100 rays per pixel already takes hours (112 minutes on my Mac for the very small and simple image above), and the image is still very noisy. At least 500 rays per pixel would be needed!
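The link between ray count and noise can be sketched as follows. A path tracer estimates each pixel by averaging many random samples, and the noise of such an average generally shrinks only with the square root of the sample count. Going from 100 to 500 rays would therefore cut the noise by a factor of roughly 2.2 while, assuming the cost grows linearly with the number of rays, taking about five times longer. The `pixelValue` function and its `traceOneRay` parameter are hypothetical stand-ins, not the actual SwiftRay code.

```swift
// Averages several samples for a single pixel.
// `traceOneRay` is a hypothetical stand-in for shooting one randomly
// jittered ray through the pixel and returning its brightness.
func pixelValue(samplesPerPixel: Int, traceOneRay: () -> Double) -> Double {
    precondition(samplesPerPixel > 0, "at least one sample is required")
    var sum = 0.0
    for _ in 0..<samplesPerPixel {
        sum += traceOneRay()
    }
    // The mean of the samples is the pixel estimate; more samples, less noise.
    return sum / Double(samplesPerPixel)
}

// Usage with a fake "ray" that just returns a random brightness in 0..<1.
let estimate = pixelValue(samplesPerPixel: 100) {
    Double.random(in: 0..<1)
}
```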

Currently the program uses a single thread. The obvious next step is therefore to parallelize the work so I can use all 4 cores of my Mac. Since I mostly used structs (≃ immutable objects), it should be easy. I’ll let you know when I find the time…
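One possible way to do it, as a rough sketch rather than a finished design, is to render each row of the image in parallel with `DispatchQueue.concurrentPerform`. The `shade(x:y:)` function below is a hypothetical placeholder for the real per-pixel work, and the flat `[Double]` image is an assumption made to keep the example short.

```swift
import Dispatch

// Hypothetical placeholder for the real per-pixel raytracing work.
func shade(x: Int, y: Int) -> Double {
    Double(x + y)
}

// Renders the image one row per task, letting Dispatch spread
// the rows across the available cores.
func renderParallel(width: Int, height: Int) -> [Double] {
    var pixels = [Double](repeating: 0, count: width * height)
    pixels.withUnsafeMutableBufferPointer { buffer in
        let out = buffer  // local copy of the pointer, captured by the closure
        DispatchQueue.concurrentPerform(iterations: height) { y in
            for x in 0..<width {
                // Each row writes to its own disjoint range of the buffer,
                // so no locking is needed.
                out[y * width + x] = shade(x: x, y: y)
            }
        }
    }
    return pixels
}

let image = renderParallel(width: 3, height: 2)
```

This only works safely because the tasks share nothing but the output buffer, and each one touches a different part of it. That is where value types help: a scene made of structs can be read from several threads without anyone mutating it behind your back.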